@thrandell and @inq were writing about using neural nets to control a robot.

One task was to let the robot move around without hitting obstacles, using distance sensors such as sonar or time-of-flight lidar.

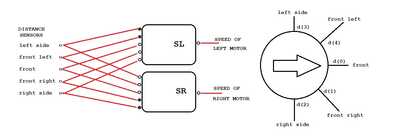

The @thrandell example had a network (actually just two output neurons, a perceptron) with eight sensor inputs and two inputs from two context neurons that held the previous two output values.
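To make that structure concrete, here is a rough Python sketch of one update step for such a network. It is only my reading of the description, not @thrandell's code: the `step` name, the `tanh` squashing, the bias terms and the weight values are all assumptions for illustration.

```python
import math

def step(sensors, context, weights, biases):
    """One update of a two-output perceptron with two context inputs.

    sensors: 8 distance readings; context: the previous 2 outputs.
    weights: 2 rows of 10 values; biases: 2 values (all hypothetical).
    """
    inputs = list(sensors) + list(context)      # 8 sensors + 2 context = 10 inputs
    outputs = []
    for row, bias in zip(weights, biases):
        total = bias + sum(w * x for w, x in zip(row, inputs))
        outputs.append(math.tanh(total))        # tanh squashing is an assumption
    return outputs

# Usage: the outputs of one step become the context inputs of the next.
sensors = [0.5] * 8
context = [0.0, 0.0]
weights = [[0.1] * 10, [-0.1] * 10]
biases = [0.0, 0.0]
for _ in range(3):
    context = step(sensors, context, weights, biases)
```

Feeding the outputs back in as context is what gives the network a short memory of what it was just doing.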

The idea was to find the neuron weights by starting from random values and then either evolving a working set with a genetic algorithm or iterating toward a working set with backpropagation.
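As a rough illustration of the genetic algorithm route, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `evolve` function, the population size, the mutation size, and especially `toy_fitness`, which is only a stand-in. For the robot, the fitness would have to come from running each weight set in the simulator and scoring how well it avoided obstacles.

```python
import random

def evolve(fitness, n_weights, pop_size=20, generations=30, sigma=0.1, seed=0):
    """Evolve a weight list that scores well under fitness (higher is better)."""
    rng = random.Random(seed)
    pop = [[rng.uniform(-1, 1) for _ in range(n_weights)] for _ in range(pop_size)]
    for _ in range(generations):
        pop.sort(key=fitness, reverse=True)
        parents = pop[: pop_size // 2]          # keep the better half unchanged
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_weights)   # one-point crossover
            child = a[:cut] + b[cut:]
            child = [w + rng.gauss(0, sigma) for w in child]  # small mutation
            children.append(child)
        pop = parents + children
    return max(pop, key=fitness)

def toy_fitness(weights):
    """Hypothetical stand-in: best score when every weight equals 0.5."""
    return -sum((w - 0.5) ** 2 for w in weights)

best = evolve(toy_fitness, n_weights=10)
```

Because the better half of each generation is carried over unchanged, the best score can never get worse from one generation to the next.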

I wondered whether I could simply hard-code the weights and get a set that worked. Trying this gave me insight into the requirements and issues involved in using distance measurements to let the robot avoid obstacles.

My thinking was that if the robot was closer to obstacles on the left it needed to turn right, and if it was closer to obstacles on the right it needed to turn left. The sensors needed to have equal but opposite effects on the two motor neurons. The left sensors would try to turn it one way and the right sensors would try to turn it the other way, with a resultant value that would keep the robot an equal distance from the obstacles on the left and right as it moved forward.

The forward sensor value, however, needed to convert to a top-speed output until the robot approached an obstacle, at which point it had to slow down and stop before a collision. Thus the speed of the robot would be directly proportional to the forward distance measure. The side sensors, however, had to rotate the robot left or right faster as the distance to the obstacle became smaller, the inverse of the requirement for the front sensor. I couldn't figure out how the artificial neuron model would reduce its output value as the input became larger.
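One possible answer to that last question, offered as a sketch and not as what this post does: a neuron with a negative weight and a positive bias gives a smaller output as its input grows. The Python below assumes the distance has already been normalised to the range 0 to 1; the `neuron` name and the weight and bias values are made up for illustration. Note that this gives a straight-line falloff, not a 1/distance curve.

```python
def neuron(x, weight, bias):
    """Linear neuron: output = bias + weight * input."""
    return bias + weight * x

# Side sensor: a closer obstacle (smaller distance) gives a larger turning signal.
for distance in (0.1, 0.5, 0.9):                # assumed normalised to 0..1
    print(distance, neuron(distance, weight=-1.0, bias=1.0))
```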

You can see the hack for this in the code below.

I made all the weight values the same. Changing this single weight value usually just changed the speed of the robot, so the path it followed remained much the same. Occasionally the different speed produced slightly different sensor values that nudged it onto a different path before it settled on the same path again or got stuck.

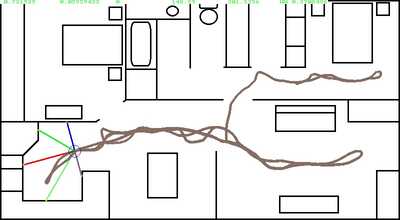

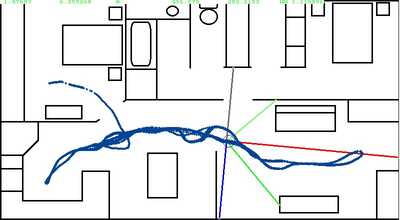

No matter where the simulated robot started, it ended up on the same path. That makes sense: it is the path that balances the summed distance measures to the obstacles on either side. The wiggly path reflects how those summed distances vary continually with the robot's position and current direction as it moves. In a passage it actually moves in a straight line, an equal distance from the two walls.

So here is the robot sensor arrangement:

And here are some of the paths it followed, each from a different starting point (all pointing right at the start).

And here is the relevant code for converting sensor values into values to input to each neuron.

    for i as single = 0 to 4
        'get sensor distance value
        distance = shootRay(ox, oy, (sensorAngle(i) + theta), colors(i))
        if i = 0 then 'front sensor
            if distance < 20 then
                d(i) = 0
            else
                d(i) = distance/100
            end if
        else
            if distance <> 0 then 'check divide by zero
                d(i) = (1/distance)*1000 'invert value as closer = larger
            else
                d(i) = 0
            end if
        end if
    next i

    'compute output value of neuron
    SL = d(0) * w + (d(7) * -w + d(6) * -w) + (d(1) * +w + d(2) * +w)
    SR = d(0) * w + (d(7) * +w + d(6) * +w) + (d(1) * -w + d(2) * -w)

    moveRobot()
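For anyone who wants to play with the idea outside the simulator, here is a rough Python version of the same calculation. It is a sketch, not the simulator code: it assumes exactly five sensors in a list, with index 0 at the front, indices 1 and 2 on one side and indices 3 and 4 on the other, and the function names `preprocess` and `motor_outputs` are made up. It takes the distances as plain numbers where the original calls `shootRay()`.

```python
def preprocess(distances):
    """Convert raw distances to neuron inputs; index 0 is the front sensor."""
    d = []
    for i, distance in enumerate(distances):
        if i == 0:
            # Front sensor: proportional to distance, zero inside the stop zone.
            d.append(0.0 if distance < 20 else distance / 100)
        else:
            # Side sensors: inverted so closer means larger; guard divide by zero.
            d.append(1000 / distance if distance != 0 else 0.0)
    return d

def motor_outputs(d, w):
    """Two output sums with equal but opposite side-sensor weights."""
    side_a = d[1] + d[2]
    side_b = d[3] + d[4]
    sl = w * (d[0] + side_a - side_b)
    sr = w * (d[0] - side_a + side_b)
    return sl, sr

# Obstacles nearer on the side of sensors 1 and 2 push the outputs apart.
print(motor_outputs(preprocess([200, 50, 50, 100, 100]), w=0.5))
```

With equal distances on both sides the two outputs are equal and only the front sensor term remains, which is why the robot runs straight down the middle of a passage.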

Looks like you're making progress!

Your solution reminds me of the Braitenberg vehicles. He described something similar with excitatory and inhibitory connections between the sensors and the motors. The excitatory connections had a positive value and the inhibitory connections a negative value. Your fixed weight value acts like a coefficient in the neuron calculation, but the behavior looks very much like what Braitenberg described.

In the code snippet I see the proportional bit that controls the speed and that d(i) seems to be an array for the sensors, but if there are 5 sensors what are d(6) and d(7)?

By the way, I cracked up when I read: ShootRay( oy )

Tom


I have thought about how to add a light source as a goal to modulate the obstacle avoidance behavior.

The simulator has eight sensors, but I am only using five of them. I edited the posted code to reflect that, although the actual code still has all eight, and then forgot to edit the last part!

    'compute output value of neuron
    SL = d(0) * w + (d(4) * -w + d(3) * -w) + (d(1) * +w + d(2) * +w)
    SR = d(0) * w + (d(4) * +w + d(3) * +w) + (d(1) * -w + d(2) * -w)

I wrote the shootRay() function for a 3D dungeon game with graphics similar to those in old computer games like Wolfenstein 3D. I have since used modified versions of it for other purposes, such as the lidar or sonar paths in the robot simulator code and a vector graphics drawing program.
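For readers who have not seen this kind of ray casting, here is a minimal Python sketch of the general idea: step along a ray until it lands in a wall cell and return the distance travelled. It is not the actual shootRay() code. The grid map, the `shoot_ray` name, the step size and the maximum range are all assumptions, and it leaves out the colour argument used for drawing.

```python
import math

def shoot_ray(grid, ox, oy, angle, max_range=100.0, step=0.05):
    """Distance from (ox, oy) to the first wall cell along angle (radians)."""
    dx, dy = math.cos(angle), math.sin(angle)
    dist = 0.0
    while dist < max_range:
        x, y = ox + dx * dist, oy + dy * dist
        col, row = math.floor(x), math.floor(y)
        if row < 0 or row >= len(grid) or col < 0 or col >= len(grid[0]):
            return dist                          # left the map: treat the edge as a wall
        if grid[row][col] == 1:                  # 1 marks a wall cell
            return dist
        dist += step
    return max_range

grid = [
    [1, 1, 1, 1, 1],
    [1, 0, 0, 0, 1],
    [1, 1, 1, 1, 1],
]
print(shoot_ray(grid, 1.5, 1.5, 0.0))            # ray pointing along +x
```

Fixed-size stepping is simple but only accurate to about one step length; a grid-walking method such as DDA would be faster and exact, at the cost of more code.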

However, I do have a real robot base with sonar sensors, which I have experimented with for obstacle avoidance, navigation and map building. It isn't going to use neural nets, though, as I have no idea what criteria to use to reward the network, or even how the network would ever produce the behaviors I want to reward.

The interesting thing about the digger wasp is it flies around its burrow connecting large distant visual features with the burrow's location. It can then find a victim and using that "map" trudge its way back to the burrow along the ground. It doesn't really bother about obstacle avoidance, it climbs over them in one or more straight lines back to the burrow! A wasp has about 4600 neurons, a bee has about 1 million neurons. They both use visual systems.