"ROS is pretty hard to learn," says this guy, James Bruton, and he is pretty up to speed on robotics.

I think a lot of people don't understand what ROS truly is: an industrial robot operating system.

It's not really designed for individuals who are writing their own code and creating their own hardware.

Hobbyists are probably better off writing their own personal version of a robot operating system.

Just my thoughts, for whatever they might be worth. I could be wrong.

I think it might make sense depending on what you are building. I am going to play with it more (I've been meaning to for a long time and never got around to it).

I think for an application like my low-level potential fields implementation, it is overkill. I am sure they have a potential fields algorithm available, and I am sure it is good, but once you know how to write it, you can manage it yourself without the overhead and complexity.
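For anyone who hasn't written one, here is a minimal sketch of a single 2D potential-fields step in Python, just to show how little code the core idea needs. It is not Brian's implementation: the gains `k_att` and `k_rep`, the influence radius `d0`, the fixed step length and the point-obstacle model are all illustrative assumptions.

```python
import math


def potential_field_step(pos, goal, obstacles, k_att=1.0, k_rep=0.5, d0=1.0, step=0.1):
    """Return the next (x, y) position after one step down the potential field.

    Illustrative gains: the goal attracts linearly, and each point obstacle
    closer than d0 repels with the classic (1/d - 1/d0) / d^2 magnitude.
    """
    # Attractive force: proportional to the vector from robot to goal
    fx = k_att * (goal[0] - pos[0])
    fy = k_att * (goal[1] - pos[1])

    for ox, oy in obstacles:
        dx, dy = pos[0] - ox, pos[1] - oy
        d = math.hypot(dx, dy)
        if 0 < d < d0:
            # Repulsion is zero at d0 and grows sharply as d approaches 0
            mag = k_rep * (1.0 / d - 1.0 / d0) / (d * d)
            fx += mag * dx / d
            fy += mag * dy / d

    # Move a fixed distance along the direction of the net force
    norm = math.hypot(fx, fy)
    if norm == 0:
        return pos  # at the goal, or stuck where the forces cancel
    return (pos[0] + step * fx / norm, pos[1] + step * fy / norm)
```

Calling it in a loop, e.g. `pos = potential_field_step(pos, (5.0, 0.0), [(2.5, 0.3)])` a few hundred times from `(0.0, 0.0)`, steers around the obstacle and settles within one step of the goal. Normalising the force to a fixed step keeps the robot from lunging when the repulsive term spikes; the trade-off is some dithering at the goal, and like any potential-fields planner it can stall in a local minimum where attraction and repulsion cancel.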

I think, though, that once you step up to SLAM and beyond, there might be serious advantages to ROS. Getting re-involved in this area (when I was a student I was trying to develop SLAM algorithms using just sonar and encoder data, before ROS had any packaged solutions) has me very interested in semantic SLAM, which is a current research area and the next logical step. It combines AI and SLAM to give the robot context and enable it to identify and interact with particular objects in the environment. It will know rooms, furniture, people, etc., and can follow directions.

I have a feeling that, to get moving on this before it is a completely solved problem, it will likely be smart to use ROS for SLAM... but we'll see 🙂

/Brian

... but man, ROS is a bit to wrap your head around.

It is something I will not be using in my effort to build a simple but hopefully practical robot. However, for students who might go on to program commercial or research robots, I would say it is a requirement.

I have returned to trying to program my Mega-based robot and have come to the conclusion that the process is all too slow. I have to upload the software, unplug the USB cable, carry the robot somewhere else and then press the go button. Observe the outcome and repeat! A better solution would be an onboard computer, always connected to the Mega, that can be controlled remotely by another computer, so I can program and test both the higher levels and the Mega at the same time. I'm not sure whether I will use my Linux-based RPi or buy a small Windows-based laptop for the job.
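One way to get that "always connected" loop is to let the onboard computer do the compiling and flashing itself, so the remote PC only has to trigger it. Below is a minimal Python sketch assuming the official `arduino-cli` tool is installed on the onboard computer (RPi, Jetson or laptop). The port name, the `arduino:avr:mega` board FQBN, the baud rate and the sketch folder are assumptions to adjust, and the `monitor` flags are worth checking against `arduino-cli monitor --help` for your version.

```python
import subprocess
from pathlib import Path

# Assumptions, adjust for your setup: the Mega enumerates as /dev/ttyACM0
# (a COM port such as COM3 on Windows) and is a Mega 2560 (arduino:avr:mega).
DEFAULT_PORT = "/dev/ttyACM0"
DEFAULT_FQBN = "arduino:avr:mega"


def build_flash_commands(sketch_dir, port=DEFAULT_PORT, fqbn=DEFAULT_FQBN, baud=115200):
    """Return the arduino-cli argument lists for compile, upload and monitor."""
    sketch = str(Path(sketch_dir))
    return [
        ["arduino-cli", "compile", "--fqbn", fqbn, sketch],
        ["arduino-cli", "upload", "-p", port, "--fqbn", fqbn, sketch],
        # Streams the Mega's Serial.print() output until interrupted (Ctrl+C)
        ["arduino-cli", "monitor", "-p", port, "--config", f"baudrate={baud}"],
    ]


def flash_and_watch(sketch_dir, port=DEFAULT_PORT, fqbn=DEFAULT_FQBN):
    """Compile, upload, then watch serial output; stops at the first failure."""
    for cmd in build_flash_commands(sketch_dir, port, fqbn):
        print("$", " ".join(cmd))
        subprocess.run(cmd, check=True)  # raises CalledProcessError on failure
```

Run from a remote session (TeamViewer, VNC or SSH) as, say, `flash_and_watch("robot_sketch")`, where `robot_sketch` is a hypothetical sketch folder, this gives a compile, upload and watch cycle without touching the USB cable, and the serial monitor shows the Mega's print statements on the remote screen. Running the steps one at a time with `check=True` means a compile error stops the run before anything is uploaded.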

I like this idea of using an onboard computer to help in development/testing. It would be nice to see status (print statements) on the remote PC. If you pursue this, please make a thread.

What is your program/run cycle? Do you plug your pc into the robot to download a program onto the Mega and then unplug and run it?

I remember @Robo-pi talking about a headless RPi?

Not sure if this is what he meant.

https://www.tomshardware.com/reviews/raspberry-pi-headless-setup-how-to,6028.html

https://www.circuitbasics.com/access-raspberry-pi-desktop-remote-connection/

I assume it can be done with the Jetson?

In my case I will be using a Windows 10 laptop as the main controller on the robot.

https://au.pcmag.com/how-to/47754/how-to-use-microsofts-remote-desktop-connection

For the Mega, yes. Flash, observe, tweak, repeat.

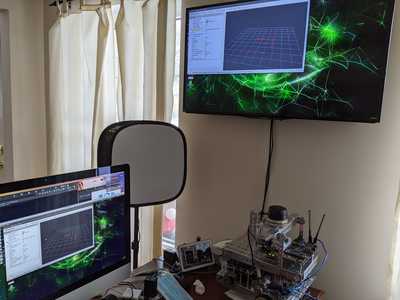

For the Jetson running ROS, I SSH in whenever possible if I'm not using anything graphical. If I am working with something where I need to observe the UI, I use VNC to control it remotely from my Mac.

The TV in this image is plugged directly into the HDMI on the Jetson and you can see on the Mac there's also a VNC window open.

/Brian

Acronyms really knock me out, as I can never remember what they stand for, assuming I ever knew: SSH and VNC window, for example.

It also wasn't clear from your "Flash, observe, tweak, repeat" whether that involved unplugging the USB cable between the robot and the PC, placing the robot on the floor, running it, and then picking the robot up again and plugging the USB back in for another tweak and download.

Today I used a program called TeamViewer to link the robot laptop to the laptop on my desk. It worked a treat. The robot laptop and the Mega remain linked by USB, and the robot can be anywhere else in the house. Indeed, it can be anywhere in the world provided it has an internet connection! I can view what the robot laptop "sees" with its built-in webcam and/or other webcams attached to it. I didn't realise it was that easy. That means I can send commands to the robot wherever it is and modify the Mega programs as well as the higher-level programs by remote control using another computer.

Working with the Arduino Mega, I have to be plugged in to flash it. Whether or not I unplug is determined, I guess, by what I'm testing and the length of my USB cord :). But yes, if I unplug, then after I observe the robot and tweak the code I have to replug to reflash it.

When I'm working on the Jetson, which runs Linux, I can remotely access that machine over Wi-Fi, similar to what you're doing with TeamViewer and the two machines. SSH stands for Secure Shell, and it's a remote access method used for Linux terminals. Essentially, you have terminal access on a remote machine just like you would on a local machine. Again, this is great for all text-based commands, but there's nothing graphical. When working in Linux this is very often all you need; it's efficient and fast. VNC (Virtual Network Computing) is a lot like TeamViewer: it's just a way to remotely control a graphical user interface like GNOME in Linux. I use both of these methods for the Jetson and therefore don't have to plug into it at all.
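To make the SSH side a little more concrete, here is a small Python sketch that drives the standard OpenSSH `ssh` client from the desktop machine. It assumes key-based login to the Jetson is already set up; the `user@jetson.local` address and the VNC display number are hypothetical placeholders. Besides running one-off terminal commands, it shows the common trick of carrying VNC through an SSH tunnel, which adds encryption when the VNC server doesn't provide its own.

```python
import subprocess


def ssh_command(target, remote_cmd):
    """argv for running one shell command on the remote machine over SSH."""
    return ["ssh", target, remote_cmd]


def vnc_tunnel_command(target, display=1):
    """argv for forwarding a local port to the remote VNC server.

    A VNC server on display :N conventionally listens on TCP port 5900 + N,
    so display 1 -> 5901. -N means "run no remote command", i.e. just hold
    the tunnel open until interrupted.
    """
    port = 5900 + display
    return ["ssh", "-N", "-L", f"{port}:localhost:{port}", target]


def run_remote(target, remote_cmd):
    """Run remote_cmd over SSH and return its standard output as text."""
    result = subprocess.run(ssh_command(target, remote_cmd),
                            capture_output=True, text=True, check=True)
    return result.stdout
```

For example, `run_remote("user@jetson.local", "uptime")` returns the Jetson's uptime line. Starting the command built by `vnc_tunnel_command("user@jetson.local")` in a terminal and then pointing the VNC viewer at `localhost:5901` opens the graphical desktop through the tunnel instead of connecting to the Jetson's VNC port directly.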

Hope that helps.

/Brian

Good luck! FYI, I'll put my DB1 on hold; I'm interested to see how you progress. I've been following the posts and watching the Henry IX video (and his other videos). At this point I don't know where I should be going; there's a lot of stuff coming at me and I'll try to digest it. If you're interested, I'll send you two video clips: one of an ultrasound robot I built (nothing great, I just followed a tutorial from Parallax), and the other of a servo motor hooked up to the sensor like a radar. I was going to add the servo motor to the ultrasound robot but never got around to it. Keep me in the loop.

Hope that helps

Yes it does, thanks for your patience.

I assume you could use the Jetson the way I use the robot laptop to program and test the Mega via a remote PC. The computers on our unmanned exploration spacecraft and Mars rovers can be reprogrammed remotely. If you put a robot arm on the robot, you could manipulate things outside from the comfort of your home.

I am actually working on this approach now. I have a Jetson Nano 2GB that I will mount to my DB1 chassis and connect to the Mega. My plan is to test accessing the robot's Nano (using VNC), write code using Visual Studio/PlatformIO, upload it to the Mega, and execute it.

Yes, I had not really considered that, but I could absolutely set up the Arduino dev environment on the Jetson, connect the Arduino locally, and control/flash it from my development machine over VNC.

The only gotcha for me is that VNC can be a small bit laggy which is sometimes enough to drive me batty. But I might try this.

/Brian